COMPUTER GAMING & SIMULATIONS

Although simulations generally require a greater amount of realism than computer games and therefore must have significantly more hardware supporting them, the Morsys algorithm can offer solutions for the challenges that both gaming and simulators face. While the advantages of this technology are expressed below in terms of projecting images on screens, they are also applicable to any other form of image projection, including through virtual reality goggles or holographic projection.

Both games and simulations strive to offer an experience as realistic as possible within the limitations of the hardware of whatever system they’re intended to run on. Designers consider the trade-offs of how many sprites can be active on a screen, whether to use 3D or pseudo-3D data, how to use placed objects, what precision of collision detection is required, how to transition between closed and open environments and—generally after all the other decisions have been made—how much processing power remains to handle texturing.

Efficiency

The efficiencies of the Morsys algorithm have been detailed in previous sections of this document, so they will not be reiterated here except for their impact on gaming and simulations.

When comparing the computer games from the ’80s, ’90s and 2000s, it’s obvious that huge strides have been made in computing hardware, allowing games to exist today that would have been the purest speculative science fiction in the early days of personal computing. The same is true of simulators used by police, the military, medical schools and others. CPUs continue to evolve; GPUs were invented and have also evolved; the speed and capacity of RAM and disk storage have steadily increased. The processes and methods used for storing, processing and displaying graphics continue to be refined, but this last piece, the software, has been responsible for significantly smaller overall gains than the hardware advances have. Over the course of a year, CPUs might double their speed, and GPUs might become 50% faster (both of these primarily due to on-chip parallel processing); but the methods, processes and algorithms used to feed the data into, through and out of the hardware systems might show speed gains of just a few percentage points.

Software remains the bottleneck; because of this, volumetric data is still underutilized in gaming and simulations.

The Morsys algorithm represents the first major revolution in volumetric data processing in decades and will allow gaming and simulations to take full advantage of volumetric data to significantly advance their realism.

Collision Detection

As noted on the Morsys overview page, it is important for a computer to know when two objects have collided. It allows the system to answer a myriad of questions that are essential to moving the scenario forward: Did the bullet hit the target? Did the player character’s hands catch the baseball? Did the surgeon’s scalpel nick the artery? Did the airplane’s wing clip the control tower? Was the river’s flow stopped by a dam?

Current games and simulations evaluate whether two objects have collided by using approximate collision detection frames that are simpler than the objects they bound. The Morsys algorithm removes the need for a separate frame, in effect giving a 1:1 correlation between an object’s shape and its collision boundary. Provided they are using volumetric data, game and simulation designers will no longer have to weigh the level of collision-detection precision that’s needed against the amount of processing power that must be devoted to managing that precision. No longer will a shot that narrowly misses be counted as a hit, or one that barely grazes its target be counted as a miss. No longer will a player character end up with a head or foot stuck through a wall, tree or rock due to the computer’s failure to accurately detect a collision.
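The gap between an approximate frame and the true shape can be illustrated with a minimal 2D sketch. This is not the Morsys algorithm; it only contrasts a bounding-box test with a test against the shape itself. The `Circle` type, the function names and the coordinates are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Circle:
    """Hypothetical stand-in for a game object: a circle in 2D."""
    x: float
    y: float
    r: float


def frames_overlap(a: Circle, b: Circle) -> bool:
    """Approximate test: compare the axis-aligned boxes that bound each circle."""
    return abs(a.x - b.x) <= a.r + b.r and abs(a.y - b.y) <= a.r + b.r


def shapes_overlap(a: Circle, b: Circle) -> bool:
    """Exact test: compare the circles themselves."""
    return math.hypot(a.x - b.x, a.y - b.y) <= a.r + b.r


bullet = Circle(0.0, 0.0, 1.0)
target = Circle(1.8, 1.8, 1.0)  # passes diagonally, just clear of the bullet

print("frame test reports a hit:", frames_overlap(bullet, target))
print("shape test reports a hit:", shapes_overlap(bullet, target))
```

Here the bounding boxes overlap at their corners while the circles never touch, so the frame test reports a hit that the shape test does not.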

Progressive Rendering & Multiresolution (Goodbye Load Screens)

As current methods do not allow volumetric data to be progressively rendered, new data sets must be loaded when a new area is entered in a game or simulation.

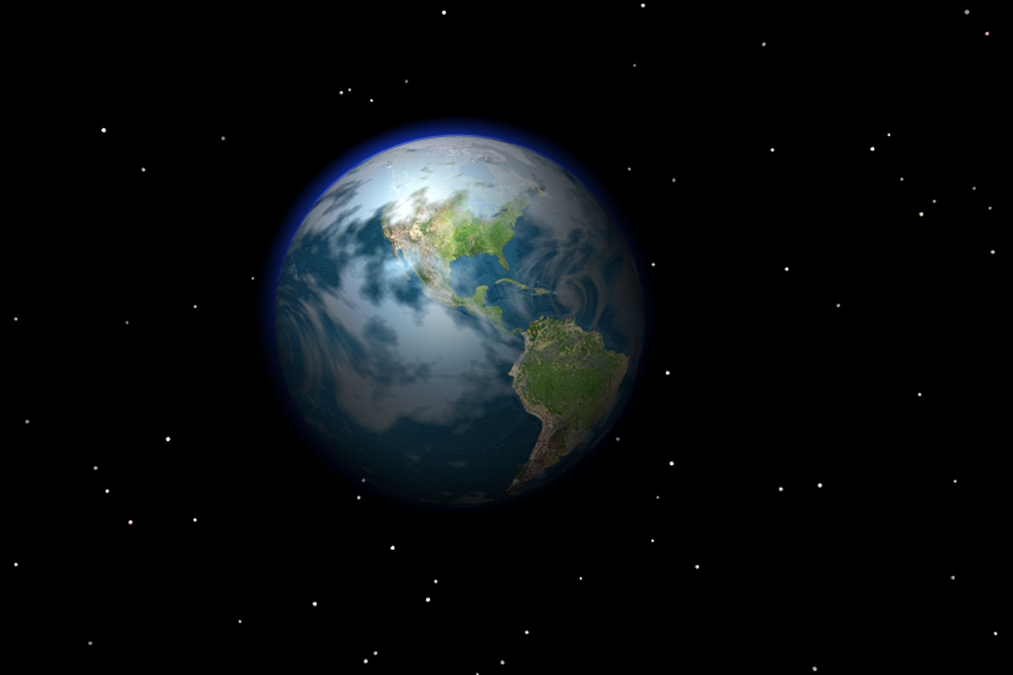

Take, for example, a game of space exploration in which a player flies her starship between planets to explore them for experience and loot. As the player looks out from the cockpit of her starship, it’s most likely that the stars, planets and asteroids she sees are 2D objects, with the possible exception of some nearby asteroids if the starship is able to interact with them (e.g., land on or crash into them). And even then, those asteroids are pseudo-3D at best, but more on that later. If the player chooses a planet on which to land her starship, the game pauses so the new area can load, and then loads only one zone of the planet. If the player moves her character out of this preloaded zone, or from an open to a closed environment within the zone, there is another pause while the new area is loaded.

The progressive rendering and multiresolution capabilities of the Morsys algorithm allow a single volumetric data set to be used throughout a gaming or simulation experience. The system calculates and displays only the necessary parts of that data set, and only at the resolution level that’s needed.

In the same space-exploration scenario, the player would be flying her starship through space, and everything she sees could be displayed at whatever level of detail is available in the data set but would actually be displayed at whatever level of detail was needed at the moment. A planet a few hundred thousand kilometers away would be rendered as a dim dot using only a few hundred tetrahedra, but, as the starship neared the planet, the image would resolve to marble size ► baseball size ► have visible weather patterns and continents ► fill the cockpit screen ► all the way through landing in a valley where grasses, trees, water and animals could be seen. All those data were present in the data set when the planet was a speck in space and were only resolved and rendered as needed. The data set of the planet would be progressively decomposed during the player’s approach, until millions of tetrahedra were used to represent the landing area and only a few hundred were used to represent those parts of the planet the player wasn’t viewing at the moment.

By the same token, the asteroid near the starting point of the player’s voyage is now invisible and is essentially ignored by the Morsys algorithm, and those data are handled at the same ultra-low resolution as the unviewed parts of the planet where the player’s starship landed.
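The idea of spending detail only where it is needed can be sketched as a simple budget function. This is a generic level-of-detail heuristic, not the Morsys decomposition itself. The function names, the 90-degree field of view and the minimum and maximum tetrahedra counts are all assumptions chosen for illustration.

```python
import math


def apparent_size_rad(radius_km: float, distance_km: float) -> float:
    """Angular diameter of a sphere viewed from distance_km (measured from its center)."""
    if distance_km <= radius_km:
        return math.pi  # viewer is on or inside the surface: the object fills the view
    return 2.0 * math.asin(radius_km / distance_km)


def tetrahedra_budget(
    radius_km: float,
    distance_km: float,
    min_count: int = 200,                 # assumed floor for far or unviewed objects
    max_count: int = 5_000_000,           # assumed ceiling when the object fills the view
    fov_rad: float = math.radians(90.0),  # assumed field of view
) -> int:
    """Scale the tetrahedra count with the share of the view the object covers."""
    fraction = min(1.0, apparent_size_rad(radius_km, distance_km) / fov_rad)
    # Screen coverage grows with the square of the angular fraction.
    return max(min_count, round(max_count * fraction ** 2))


# Hypothetical planet with a 6,000 km radius, viewed during an approach.
for distance in (10_000_000, 500_000, 20_000, 6_000):
    print(distance, "km ->", tetrahedra_budget(6_000, distance), "tetrahedra")
```

The same data set backs every step of the approach; only the number of tetrahedra requested from it changes.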

Environments

The advantages of using volumetric data for gaming and simulation environments are some of the most exciting aspects of implementing the Morsys algorithm in these industries.

Surfaces With Substance Behind Them: Most games today use pseudo-3D shells to imply a 3D environment, with limited exceptions for specific areas within some games. Some simulations, such as surgical simulations, are more likely to use truly volumetric data, but even then they have to severely limit the size of the data set due to the inefficiency of current data-manipulation methods.

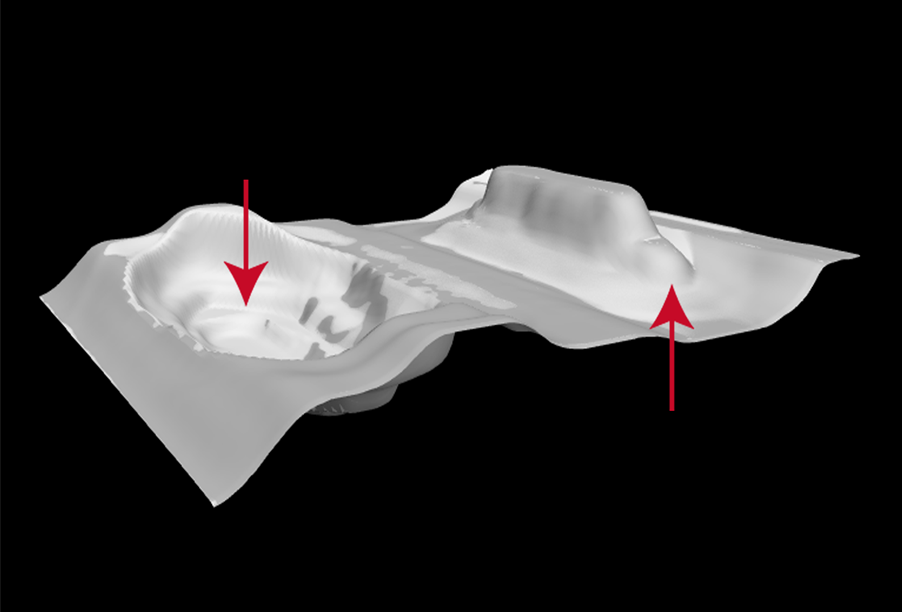

If the top layer of pixels were to be peeled off a hillside in a World of Warcraft scene, for example, the hill would be seen to actually be a bump in a single-layered sheet of pixels, with only empty space between one side of the hill and the single layer of pixels making up the other side. The figure below is a simple illustration of how a single sheet of pixels can be manipulated to give the illusion that volumetric space is being displayed. This illusion is immediately shattered if a supposedly solid object is ever viewed from the inside.

Figure 5: A Pseudo-3D Sheet of Pixels

This reliance on pseudo-3D environments means that players have limited options when interacting with those environments. Except for certain “placed objects”—a rock sitting in a field or a hut sitting on a beach—which have been placed as destructible objects, players have no ability to really impact their environment because that environment is largely a sham. Other placed objects may not be marked as destructible by the game designers, which results in unrealistic situations, such as a speeding M1 Abrams tank being stopped cold by a sapling five centimeters thick, or a wall of a canvas tent stopping a bullet. And yet other items on the screen that appear to be objects may not be objects at all but are textures, such as are often used for grasses in a field or the canopy of a rainforest, and have no impact whatsoever on gameplay.

The use of volumetric data in games and simulations, which the Morsys algorithm will allow, opens new vistas for realism and styles of games and gameplay.

Imagine a game world that was constructed with volumetric data and was running using the Morsys algorithm. Each tetrahedron from the data set is capable of containing virtually any number of associated data points, such as density, heat, material type, weight, fluidity, or flammability. When combined with a good physics engine, players would be able to interact with their environment in almost any way they could imagine. A player could decide to tunnel into the side of a hill, and the game would cause the roof of the tunnel to collapse if it was not properly shored up as dictated by the rules built into the physics engine. Another player could redirect the flow of a stream simply by creating a different path of least resistance for the water, even if the game designers had not thought of this as a possibility.

Trees could be cut down and used to build a house. Seismic events could wreak permanent changes on the landscape, and fires could be allowed to behave as fires should. Actions on one side of the world could impact weather patterns on the other, if the game designers decided to add weather data to the game’s data set. All of these possibilities and innumerable others flow from using volumetric data to build the environment, and associating certain values with the shape data.
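One way to picture values associated with shape data is a tetrahedron record that carries its own attributes. The sketch below is an assumption about how such a record might look, not the Morsys data format. The `Tetrahedron` class, the attribute names and the sample values are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple, Union

Point = Tuple[float, float, float]


@dataclass
class Tetrahedron:
    """Hypothetical volumetric cell: four vertices plus arbitrary attributes."""
    vertices: Tuple[Point, Point, Point, Point]
    attributes: Dict[str, Union[float, str]] = field(default_factory=dict)

    def volume(self) -> float:
        """Volume from the scalar triple product of three edge vectors, divided by 6."""
        a, b, c, d = self.vertices
        ab = [b[i] - a[i] for i in range(3)]
        ac = [c[i] - a[i] for i in range(3)]
        ad = [d[i] - a[i] for i in range(3)]
        cross = (
            ac[1] * ad[2] - ac[2] * ad[1],
            ac[2] * ad[0] - ac[0] * ad[2],
            ac[0] * ad[1] - ac[1] * ad[0],
        )
        return abs(sum(ab[i] * cross[i] for i in range(3))) / 6.0

    def mass(self) -> float:
        """Mass a physics engine could use, derived from the stored density."""
        return self.volume() * float(self.attributes.get("density", 0.0))


# A unit tetrahedron of rock; density in kg per cubic meter is an assumed value.
rock = Tetrahedron(
    vertices=((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)),
    attributes={"material": "granite", "density": 2400.0, "flammability": 0.0},
)
print(rock.attributes["material"], rock.volume(), rock.mass())
```

Because the attributes travel with the geometry, a physics engine could apply the same rules to a hillside, a tree or a stream bed without special cases for each.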

As a side note, creating a multiplayer game where players were able to impact the game environment in ways the developers may not have intended or even considered is simultaneously exciting and scary. For massively multiplayer online role-playing games, the issue of how to handle “griefing” would become significantly more problematic than it already is, but the game would also appeal to the creative side of gamers in a way no game ever has.

No More Differentiation Between Open and Closed Environments: Current games and simulations have to use different graphics engines to handle open (standing in a field under the open sky) and closed (in a cave) environments. This is because current methods for pseudo-3D environments cannot have any part of the environment overhang any other part. Sometimes games fake an open environment that is actually closed, so it appears a player character can transition between indoor and outdoor environments without loading a new graphics engine, but it’s just a visual trick.

One of the advantages of volumetric data is that the concept of open versus closed environments loses its distinction—it’s all the same environment with a truly volumetric data set.
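The overhang limitation can be shown with a small sketch. It assumes the single-layered sheet is stored as a heightmap (one height per ground position) and uses a set of cubic cells as a simplified stand-in for tetrahedra. Both representations and all names here are illustrative assumptions.

```python
from typing import Dict, Set, Tuple

# Pseudo-3D sheet: exactly one surface height per (x, y) ground position.
heightmap: Dict[Tuple[int, int], int] = {(0, 0): 3, (1, 0): 3, (2, 0): 3}


def sheet_is_solid(x: int, y: int, z: int) -> bool:
    """Everything from the ground up to the stored height counts as solid."""
    return 0 <= z <= heightmap.get((x, y), -1)


# Volumetric stand-in: every solid cell is listed explicitly.
solid_cells: Set[Tuple[int, int, int]] = {
    (x, 0, z) for x in range(3) for z in range(4)
}
solid_cells.discard((1, 0, 1))  # hollow out a cave inside the hill


def volume_is_solid(x: int, y: int, z: int) -> bool:
    """A cell is solid only if the data set says so."""
    return (x, y, z) in solid_cells


# The sheet cannot express the cave: the cell under the hilltop stays solid.
print("sheet, inside cave:", sheet_is_solid(1, 0, 1))
# The volumetric set keeps the cave empty and the roof above it solid.
print("volume, inside cave:", volume_is_solid(1, 0, 1))
print("volume, cave roof:", volume_is_solid(1, 0, 3))
```

With one height per position there is no way to record empty space beneath a solid roof, which is why a cave and a field need no separate handling once the data are volumetric.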

No More Counting Sprites: For the same technical reasons why there is no longer a difference between open and closed environments with volumetric data, the concept of screen sprites also loses its meaning when volumetric data sets are used. Sprites, also known as “BOBs,” “BLOBs,” “Billboards” or “Imposters,” are partially transparent 2D animations that are mapped onto a pseudo-3D scene.

It takes many processor cycles to track the movements and collisions of sprites, so one of the trade-offs game designers currently have to consider is how many sprites they will allow, the impact that decision will have on the other parts of the designers’ formula, and the final impact on the minimum hardware specs required to run the game.

A game or simulation built using truly volumetric data will make the sprite issue obsolete, as sprites will no longer exist as a concept. With such a game, moving objects, stationary objects and the environment will all be part of the same data set and will interact with each other using the same rule set. No longer will there need to be terrain (a field), a placed object (a rock sitting in the middle of said field), and sprites (a tractor moving across the field, a farmer sitting on the tractor, a dog following the farmer, and the crows flying overhead).

Moving objects within a volumetric data set will still take more processing power than stationary objects since there is further decomposition, recomposition, texturing and so forth associated with displaying a moving object from a volumetric data set. But the impact is minor compared to handling sprites in a pseudo-3D environment where they take up CPU and GPU cycles that are hugely disproportionate to the actual amount of influence they have on the image on the screen.